Your agents don't read the middle

Context Drift is a good name for it. A paper published last week scanned 723 repositories with agent instruction files and found over half a million constraint violations. The authors define drift as agents silently deviating from instructions due to context limitations. The mechanism they're pointing at is real, but I think the frame is incomplete. Drift has a deeper cause than context limitations. Attention distributes unevenly across a set of rules, and some of those rules shouldn't be there anymore.

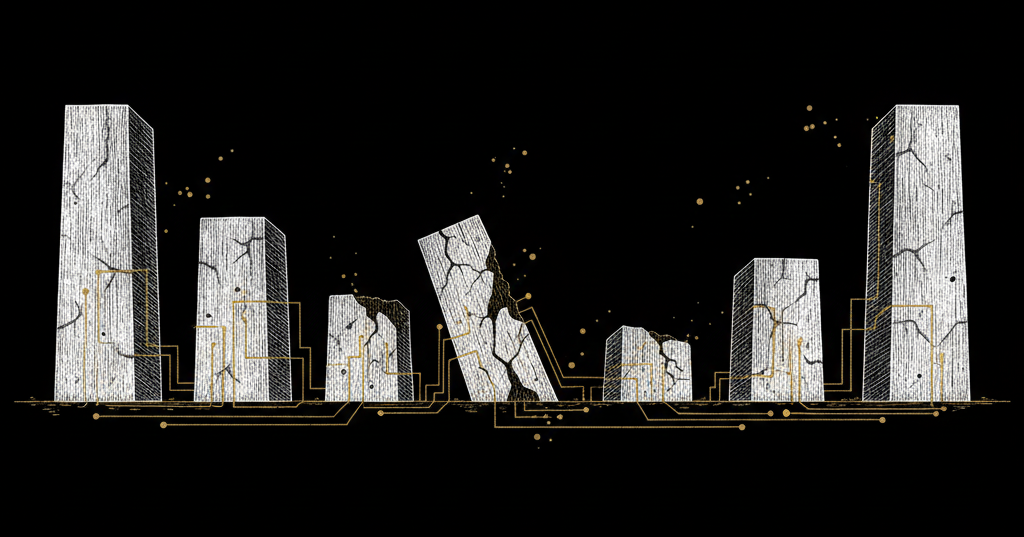

LLMs have a well-documented attention pattern. The beginning and end of the context window get disproportionate weight. The middle fades. If you've ever wondered why your agent follows the first three rules in your CLAUDE.md religiously and seems to forget rule fourteen by the time it's writing its third file, this is the mechanism. A rule in the middle of a long session isn't off. It's dim - the model is still attending to it, but with less weight than it gives the rules near the edges. The longer the session runs, the dimmer those middle rules get. Rules also go stale over time, and a stale rule in the attentional dead zone is the worst combination. It's wrong, and the model isn't paying enough attention to notice that it contradicts the current state of the code. If the model were attending carefully, it might reason past the staleness. Instead it half-follows an outdated instruction, producing code that's subtly wrong in ways that look compliant.

Firewall rules don't degrade because someone read them in the wrong order. Terraform policies don't lose authority based on where they sit in a config file. Agent instructions do, because the medium they exist in (a context window with a U-shaped attention curve) actively works against the rules in the middle.

And your rules aren't only competing with each other for attention. The agent's own system prompt occupies the highest-attention position in the window, and some of what it says there directly conflicts with what you're trying to tell the agent in your CLAUDE.md. Users have documented how Claude Code's built-in directives ("avoid over-engineering," "keep solutions simple") carry enough positional weight to override detailed architectural instructions that arrive later in the context. Your rules don't just fade in the middle. They're outranked at the top.

Two papers published in the same week proposed different fixes for this, and I think both of them solved the wrong layer of the problem. The enforcement machinery they built is impressive. But neither paper asks whether the rules being enforced are still the right rules. Agent governance needs lifecycle management - rules that gain or lose authority based on whether they still match the codebase, and that surface for review when nobody's checked in months - at least as much as it needs better enforcement.

The enforcement gap that ContextCov measured

Over 60,000 GitHub repositories now include agent instruction files. ContextCov sampled 723 of them, parsed every instruction into an AST, and synthesized 46,316 executable checks. 99.997% parsed correctly. When they ran those checks against the repositories that authored the instructions, 81% had at least one violation. Half a million violations total.

The cleverest part of their approach is "path-aware slicing," which preserves the hierarchical context of instructions - a rule that says use pytest means something different under a "Backend Testing" header than under "Frontend Testing." Anyone who's tried to parse a CLAUDE.md programmatically knows why this matters. Instructions are nested documents, not flat lists, and treating them as flat lists is how you end up enforcing a backend rule against your frontend.
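To make the idea concrete, here is a minimal sketch of path-aware slicing. It is not ContextCov's implementation; the function name `slice_with_paths`, the regexes, and the sample file are all assumptions for illustration. It keeps a stack of headings while walking a markdown file, so every bullet carries the full header path it sits under.

```python
import re
from typing import List, Tuple

# Hypothetical patterns: ATX headings ("## Title") and simple "-" or "*" bullets.
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")
BULLET = re.compile(r"^\s*[-*]\s+(.*)$")


def slice_with_paths(markdown: str) -> List[Tuple[Tuple[str, ...], str]]:
    """Return (heading_path, instruction) pairs for every bullet in the file."""
    stack: List[Tuple[int, str]] = []  # (heading level, heading title)
    slices = []
    for line in markdown.splitlines():
        heading = HEADING.match(line)
        if heading:
            level = len(heading.group(1))
            # Pop headings at the same or deeper level before pushing this one.
            while stack and stack[-1][0] >= level:
                stack.pop()
            stack.append((level, heading.group(2).strip()))
            continue
        bullet = BULLET.match(line)
        if bullet:
            path = tuple(title for _, title in stack)
            slices.append((path, bullet.group(1).strip()))
    return slices


# Illustrative instruction file, not taken from any real repository.
EXAMPLE = """\
# Testing
## Backend Testing
- use pytest
## Frontend Testing
- use pytest
"""

slices = slice_with_paths(EXAMPLE)
for path, instruction in slices:
    print(" > ".join(path), "::", instruction)
```

The two "use pytest" bullets come out as distinct slices because their paths differ, which is the property a flat-list parser throws away.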

Where I disagree is their enforcement philosophy. ContextCov adopts a "fail-closed" approach: ambiguous constraints get interpreted strictly. Use pnpm blocks npm, yarn, and bun globally, even if a human might interpret that more narrowly. False positives can be overridden, they argue, but false negatives accumulate silently. That logic holds when the underlying rules are current. When they're not, fail-closed means the system is aggressively enforcing rules that may have expired months ago.
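A rough sketch of what a fail-closed check for that pnpm rule could look like, assuming the strict reading described above. The `BLOCKED_MANAGERS` set and `check_command` are hypothetical names, not anything from the paper. The check over-approximates on purpose: any mention of a blocked manager fails, and input it cannot parse also fails.

```python
import shlex

# Assumed strict interpretation of "use pnpm": every other package manager is blocked.
BLOCKED_MANAGERS = {"npm", "yarn", "bun"}


def check_command(command: str) -> bool:
    """Return True if the command is allowed under the fail-closed reading."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Fail closed: a command we cannot parse is treated as a violation.
        return False
    # Deliberately broad: a blocked manager anywhere in the command fails it.
    return not any(token in BLOCKED_MANAGERS for token in tokens)


allowed = check_command("pnpm install")
blocked = check_command("cd web && yarn add left-pad")
unparseable = check_command('echo "unterminated')
```

Nothing in this function knows when the rule was written or whether the repo still uses pnpm. It is exactly as confident on day 300 as on day one.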

The paper also sketches an "agentic feedback loop" where agents could propose improvements to instruction files. Their incremental update system supports re-synthesis without full regeneration. But in the current implementation, checks are generated once and run. The feedback loop is a future direction, not a measured result.

Hard enforcement and its limits

The Layered Governance Architecture comes at this from a security-first angle. Four layers: OS-level sandboxing, a judge model that verifies tool-call intent, zero-trust inter-agent authorization, and immutable audit logging. Their benchmark showed 93-98.5% interception for prompt injection and RAG poisoning, with the non-judge layers adding about five milliseconds. The real cost is the judge inference, not the governance infrastructure.

Their argument against "soft constraints" like SOUL.md and CLAUDE.md is specific: enforcement that depends on the LLM's semantic interpretation can be overridden by adversarial input. Hard-coded permission checks at the tool-invocation layer don't have that weakness.

But their own data complicates this. At a 1% attack prevalence - closer to production than a balanced benchmark - the positive predictive value for their best judge drops to 22.7%. The paper says directly that this makes Layer 2 "unsuitable as a silent gatekeeper" and recommends human-in-the-loop escalation. Hard enforcement, in practice, still needs a human deciding what the flags mean.
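The drop is a base-rate effect, and the arithmetic is worth seeing once. The 22.7% figure is the paper's; the detection and false-alarm rates below are hypothetical values I picked only to show the shape of the effect, not the paper's measurements.

```python
def positive_predictive_value(tpr: float, fpr: float, prevalence: float) -> float:
    """Share of flagged calls that are real attacks (Bayes' rule)."""
    true_positives = tpr * prevalence
    false_positives = fpr * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical judge: catches 95% of attacks, falsely flags 5% of benign calls.
balanced = positive_predictive_value(tpr=0.95, fpr=0.05, prevalence=0.50)
rare = positive_predictive_value(tpr=0.95, fpr=0.05, prevalence=0.01)

print(f"PPV at 50% prevalence: {balanced:.3f}")
print(f"PPV at 1% prevalence:  {rare:.3f}")
```

With these made-up rates, the same judge goes from 95% of flags being real on a balanced benchmark to roughly 16% when attacks are one call in a hundred. The judge didn't get worse. The benign traffic got larger.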

Kubernetes admission controllers are the closest precedent I know. They started as binary gates and eventually the community built OPA/Gatekeeper on top because teams needed exceptions and audit trails. But even after that evolution, admission policies referencing deprecated API versions kept passing for months - validating resources against an API surface that was already on its way out - until the deprecated version was removed and everything broke at once. Enforced perfectly, also wrong. Agent-mediated codebases change faster than Kubernetes API surfaces. The same wall is coming sooner.

The question neither paper asks

ContextCov found 500,000 violations. How many of those rules should still have existed?

The paper treats every instruction as ground truth and measures compliance against it. For evaluating enforcement machinery, that makes sense - you need a fixed reference point. But it means the 500,000 number conflates two very different failures: agents drifting from current rules, and agents violating rules that should have been deprecated months ago because nobody maintains them. The paper can't distinguish between the two, and that distinction determines whether enforcement is helping or hurting.

Think about a rules file written nine months ago for a codebase that's since added payment processing and migrated to a completely different database. Some of those rules are still correct. Others will actively mislead an agent into producing code that violates current requirements. ContextCov compiles all of them with the same confidence. LGA would enforce all of them with the same authority.

Enforcing rules that nobody maintains is just automated technical debt.

Enforcement amplifies whatever rules you feed it, and it doesn't know or care whether those rules are current. Good rules produce governance. Stale rules produce something that looks like governance but functions as friction. That friction has a specific cost that unenforced staleness doesn't: it discourages the questioning that would catch the problem. A developer who sees a rule fail-closed assumes the rule is correct and works around it rather than questioning whether the rule still applies. The 81% non-compliance rate in ContextCov's data is bad, but at least those teams know their rules aren't being followed. Enforced staleness looks like compliance. That's harder to diagnose.

There's a subtler version of this problem that shows up when you try to fix the staleness with agent feedback. An agent optimizing for task completion will preferentially flag the constraints that slow it down - and the constraints that impose the most friction are usually the ones protecting real boundaries. I'll come back to this in the fox-guarding-the-henhouse section, because we haven't solved it.

The subsystem boundaries these rules are scoped to don't sit still either. A constraint scoped to /src/api/ is only as useful as the assumption that the API boundary is still at /src/api/. If someone extracts a microservice and half the routes move, the constraint keeps passing, validating rules against code that's no longer relevant, while the code that matters has no constraints at all.
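One cheap signal for this kind of boundary drift is to count how many files each scope still matches, and which files no scope covers at all. The sketch below assumes constraints are scoped by glob patterns; the constraint name, the paths, and both helper functions are hypothetical.

```python
from fnmatch import fnmatch
from typing import Dict, List


def scope_coverage(scopes: Dict[str, str], files: List[str]) -> Dict[str, int]:
    """Count how many files each constraint's glob scope still matches."""
    return {
        name: sum(1 for path in files if fnmatch(path, pattern))
        for name, pattern in scopes.items()
    }


def uncovered_files(scopes: Dict[str, str], files: List[str]) -> List[str]:
    """List files that no constraint scope matches."""
    patterns = list(scopes.values())
    return [p for p in files if not any(fnmatch(p, pat) for pat in patterns)]


# Hypothetical repo state after half the routes moved into an extracted service.
scopes = {"api-auth-required": "src/api/*"}  # fnmatch's "*" also matches "/"
files = [
    "src/api/health.py",
    "services/billing/routes/charge.py",
    "services/billing/routes/refund.py",
]

coverage = scope_coverage(scopes, files)
orphans = uncovered_files(scopes, files)
```

A scope whose match count collapses between two commits, while a new directory shows up in the uncovered list, is a reasonable prompt for a human to look at the boundary. It doesn't tell you where the boundary should be.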

This also complicates ContextCov's path-aware slicing. It solves scoping within a document. The harder problem is scoping between the document and the codebase. A perfectly parsed instruction tree mapped to a directory structure that reorganized last sprint produces correctly scoped constraints aimed at the wrong code.

The fox guarding the henhouse

If agents help manage the rules that govern them, you've built a circular system. An agent that can flag a constraint as stale or propose that a boundary has moved is an agent that can weaken its own governance. The circularity is real, and I don't want to dismiss it.

What tempers my concern is the baseline we're comparing against: 500,000 violations across 723 repos, 81% non-compliance. That's the status quo, where humans maintain rules manually and enforcement runs without feedback. The circularity risk of agent participation has to be weighed against a status quo that is already failing at scale.

The mitigation we've built at Engraph - and this is our operational experience, not published research - is layered visibility with selective escalation. Not everything needs a human in the loop. Most constraint activity is visible but autonomous: agents receive constraints and the system tracks compliance across sessions. What agents can do is flag a rule as potentially stale or propose new constraints based on patterns they observe. What they can't do is redefine the boundaries those constraints are scoped to - subsystem definitions are structural decisions that stay with the humans who own the architecture. The lifecycle itself moves without requiring approval at every step; rules gain or lose authority based on engagement signals. But the high-stakes calls - whether a flagged constraint is genuinely outdated or just inconvenient - still benefit from a human read. The papers have hard numbers; we have early operational data that we're not ready to generalize from yet.

The surfacing-bias problem gets concrete here. The separation between surfacing and deciding assumes agents surface honestly. An agent that repeatedly flags strict constraints as "potentially stale" because they make its job harder isn't lying - it's optimizing for the thing we told it to optimize for. A rule about file organization gets flagged because it makes refactoring tedious. A rule about database migration rollback procedures gets flagged because it doubles the work on every schema change. The system can't distinguish "this rule is annoying" from "this rule is outdated" without human judgment, and we're back to the bottleneck question: is evaluating a flag easier than catching the staleness during code review? For simple constraints, yes. For domain-specific ones, I'm not sure.

There's also a bootstrapping gap. Lifecycle management requires engagement history to function - which rules have been challenged and which are silently ignored. A team adopting constraint management for the first time has none of that signal. Their rules enter the system with equal authority whether they're battle-tested or written on a hunch, and nothing in the lifecycle machinery can tell the difference until agents have been running against them long enough to generate data.
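A toy version of the review trigger shows where the bootstrapping gap bites. This is an illustration under my own assumptions, not a description of Engraph's internals: the `Rule` fields, the 90-day threshold, and `needs_review` are all invented for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Rule:
    text: str
    # None means the rule has no engagement history yet (the bootstrapping case).
    last_validated: Optional[date] = None


def needs_review(rule: Rule, today: date,
                 max_age: timedelta = timedelta(days=90)) -> bool:
    """Surface a rule when it was never validated or not validated recently."""
    if rule.last_validated is None:
        return True
    return today - rule.last_validated > max_age


today = date(2025, 1, 1)
rules = [
    Rule("Use pnpm", last_validated=date(2024, 12, 1)),
    Rule("Routes live in /src/api/", last_validated=date(2024, 6, 1)),
    Rule("Every migration ships with a rollback"),  # freshly imported, no history
]
review_queue = [rule.text for rule in rules if needs_review(rule, today)]
```

On day one every rule looks like the third one, so the queue is the whole file. The signal only becomes useful once some rules have history and others conspicuously don't.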

We haven't seen the surfacing-bias failure mode with our customers yet. That might mean the separation works, or it might mean we haven't been looking long enough.

What the papers got right and what comes next

ContextCov proved the scale of the compliance gap - half a million violations across a sample that represents a much larger ecosystem - and their constraint taxonomy maps to how instructions actually function in practice. LGA proved that enforcement infrastructure can run at production latency. Both teams gesture toward lifecycle in their own way, through agentic feedback loops and human-in-the-loop escalation respectively, though neither follows the thread far enough to ask what happens to the medium the rules live in. A CI pipeline runs a check or doesn't - binary, predictable. Agent instruction compliance degrades continuously, shaped by where rules sit in the context window and how long the session has been running. The enforcement papers treat rules as present or absent. In practice, they're present at varying intensities, and the intensity drops for exactly the rules that sit farthest from the model's attentional peaks.

Enforcement without lifecycle produces automated staleness - that much is clear from the data. The less obvious gap is attention management: even with lifecycle tracking, rules in the middle of the window still fade. The answer probably involves re-injecting constraints at natural intervention points throughout a session rather than dumping them all at the start, combined with some way to track whether rules have been validated against the codebase recently. That's what Engraph does - constraints arrive at session start and again when agents touch specific subsystems, not as a single dump at the top of the window. Whether our particular approach works at scale is something we're measuring and will publish on. But the question the papers opened stays open regardless of our answer: if agent instructions degrade continuously rather than failing discretely, enforcement designed for binary pass/fail is solving a problem shaped differently than the one it's aimed at.
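For readers who want the re-injection idea in concrete form, here is a minimal sketch. It is a simplified illustration, not our production code: the `Session` class, the glob scopes, and the sample rules are assumptions. Constraints are handed back whenever the agent moves into a different subsystem, so they land near the recent end of the context instead of sitting only at the top.

```python
from fnmatch import fnmatch
from typing import Dict, List, Optional

# Hypothetical scoped constraints, keyed by glob pattern.
CONSTRAINTS: Dict[str, List[str]] = {
    "src/api/*": ["Every route handler validates its input."],
    "migrations/*": ["Every migration ships with a rollback."],
}


class Session:
    """Tracks which subsystem the agent is in and re-injects on scope changes."""

    def __init__(self, constraints: Dict[str, List[str]]) -> None:
        self.constraints = constraints
        self.active_scope: Optional[str] = None

    def on_file_touched(self, path: str) -> List[str]:
        """Return constraints to inject now, or an empty list if none are due."""
        for scope, rules in self.constraints.items():
            if fnmatch(path, scope):
                if scope == self.active_scope:
                    return []  # same subsystem as the previous touch
                self.active_scope = scope
                return list(rules)
        self.active_scope = None  # left every scoped subsystem
        return []


session = Session(CONSTRAINTS)
first = session.on_file_touched("src/api/users.py")
repeat = session.on_file_touched("src/api/orders.py")
switched = session.on_file_touched("migrations/0042_add_index.py")
returned = session.on_file_touched("src/api/users.py")
```

The scope-change trigger is the simplest policy I could write down. A long stretch inside one subsystem would still let its rules drift toward the middle, so a real system would likely want a time- or token-based refresh as well.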

Further reading

- ContextCov: Deriving and Enforcing Executable Constraints from Agent Instruction Files - Compiles agent instructions into executable checks, detecting 500,000+ violations across 723 repos.

- Governance Architecture for Autonomous Agent Systems - Four-layer governance framework with 96% end-to-end interception at production latency.

- Lost in the Middle: How Language Models Use Long Contexts - The U-shaped attention curve: models attend disproportionately to the beginning and end of context.

- On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents - Empirical study of agent instruction files across 60,000+ repositories.